CUDA-Dockerized Implementation of a Hybrid (Generative and Retrieval-Based) Conversational Chatbot Model in TensorFlow.

The current results are still pretty lousy, as these sample exchanges (input - model reply) show:

hello - hello

how old are you ? - twenty .

i am lonely - i am not

nice - you ' re not going to be okay .

so rude - i ' m sorry .

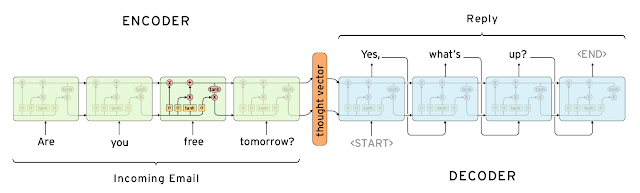


Courtesy of this article.

Setup

git clone git@github.com:AhmedAbdalazeem/ChatBot.git

cd tf_seq2seq_chatbot

bash setup.sh

Run

Train a seq2seq model on a small (17 MB) corpus of movie subtitles:

python train.py

(This command runs the training on a CPU. For GPU training, see the GPU usage section.)

Test the trained model on a set of common questions:

python test.py

Chat with the trained model in the console:

python chat.py

All configuration parameters are stored in tf_seq2seq_chatbot/configs/config.py
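Since the settings live in a single Python file, it can be handy to print them before a long training run. The helper below is a minimal sketch, not part of the project: `load_config` is a hypothetical name, and it assumes config.py is a plain module of top-level assignments whose imports resolve from wherever the script is run.

```python
import importlib.util
import inspect


def load_config(path):
    """Execute a Python config file and return its public top-level values.

    Hypothetical helper: assumes the file is a plain module whose settings
    are module-level assignments and that running it has no side effects.
    """
    spec = importlib.util.spec_from_file_location("config", path)
    module = importlib.util.module_from_spec(spec)
    spec.loader.exec_module(module)  # runs the config file once
    return {
        name: value
        for name, value in vars(module).items()
        # skip private/dunder names and modules the config itself imported
        if not name.startswith("_") and not inspect.ismodule(value)
    }
```

Calling `load_config("tf_seq2seq_chatbot/configs/config.py")` from the repository root and printing the resulting dictionary shows which values a run will use.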

GPU usage

If you already have a working GPU configuration for TensorFlow, this should do the job:

python train.py

Otherwise, you may need to build TensorFlow from source and run the code as follows:

cd tensorflow # cd to the tensorflow source folder

cp -r ~/tf_seq2seq_chatbot ./ # copy project's code to tensorflow root

bazel build -c opt --config=cuda tf_seq2seq_chatbot:train # build with GPU support enabled

./bazel-bin/tf_seq2seq_chatbot/train # run the built code
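To decide which of the two routes above applies, it may help to first check whether the installed TensorFlow already sees a GPU. The snippet below is a rough sketch rather than project code: `visible_gpus` is a hypothetical helper, and because this README does not state which TensorFlow version the project targets, it tries the TF 2.x device API first and falls back to the older `device_lib` listing.

```python
def visible_gpus():
    """Return the names of GPUs TensorFlow can see, or None if TensorFlow is missing.

    Hypothetical helper; the TensorFlow version used by the project is an
    unknown here, so both the newer and the older device listings are tried.
    """
    try:
        import tensorflow as tf
    except ImportError:
        return None  # TensorFlow is not installed in this environment

    # Newer releases expose the physical device list directly.
    config = getattr(tf, "config", None)
    if config is not None and hasattr(config, "list_physical_devices"):
        return [device.name for device in config.list_physical_devices("GPU")]

    # Fallback for older TF 1.x releases.
    from tensorflow.python.client import device_lib
    return [
        device.name
        for device in device_lib.list_local_devices()
        if device.device_type == "GPU"
    ]
```

An empty list from `visible_gpus()` suggests that python train.py would fall back to the CPU, in which case the build-from-source route may be needed.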

Requirements

References