Spoken Language Understanding (SLU) is one of the core components of a task-oriented dialogue system, which aims to extract the semantic meaning of user queries (e.g., intents and slots).

In this work, we introduce OpenSLU, an open-source toolkit to provide a unified, modularized, and extensible toolkit for spoken language understanding. Specifically, OpenSLU unifies 10 SLU baselines for both single-intent and multi-intent scenarios, which support both non-pretrained and pretrained models simultaneously. Additionally, OpenSLU is highly modularized and extensible by decomposing the model architecture, inference, and learning process into reusable modules, which allows researchers to quickly set up SLU experiments with highly flexible configurations. We hope OpenSLU can help researcher to quickly initiate experiments and spur more breakthroughs in SLU.

If you find this project useful for your research, please consider citing the following paper:

@misc{qin2023openslu,

title={OpenSLU: A Unified, Modularized, and Extensible Toolkit for Spoken Language Understanding},

author={Libo Qin and Qiguang Chen and Xiao Xu and Yunlong Feng and Wanxiang Che},

year={2023},

eprint={2305.10231},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

OpenSLU requires Python>=3.8, and torch>=1.12.0.

git clone https://github.com/LightChen233/OpenSLU.git && cd OpenSLU/

pip install -r requirements.txtroot

├── common

│ ├── config.py # load configuration and auto preprocess ignored config

│ ├── global_pool.py # global variable pool, you can use set_value() to add variable into pool and get_value() to get variable from pool.

│ ├── loader.py # load data from hugging face

│ ├── logger.py # log predict result, support [fitlog], [wandb], [local logging]

│ ├── metric.py # evalutation metric, support [intent acc], [slot F1], [EMA]

│ ├── model_manager.py # help to prepare data, prebuild training progress.

│ ├── saver.py # help to manage to save model, checkpoint etc. to disk.

│ ├── tokenizer.py # tokenizer also support no-pretrained model for word tokenizer.

│ └── utils.py # canonical model communication data structure and other common tool function

├── config

│ ├── reproduction # configurations for reproducted SLU model.

│ └── **.yaml # configuration for SLU model.

├── logs # local log storage dir path.

├── model

│ ├── encoder

│ │ ├── base_encoder.py # base encoder model. All implemented encoder models need to inherit the BaseEncoder class

│ │ ├── auto_encoder.py # auto-encoder to autoload provided encoder model

│ │ ├── non_pretrained_encoder.py # all common-used no pretrained encoder like lstm, lstm+self-attention

│ │ └── pretrained_encoder.py # all common-used pretrained encoder, implemented by hugging-face [AutoModel].

│ ├── decoder

│ │ ├── interaction

│ │ │ ├── base_interaction.py # base interaction model. All implemented encoder models need to inherit the BaseInteraction class

│ │ │ └── *_interaction.py # some SOTA SLU interaction module. You can easily reuse or rewrite to implement your own idea.

│ │ ├── base_decoder.py # decoder class, [BaseDecoder] support classification after interaction, also you can rewrite for your own interaction order

│ │ └── classifier.py # classifier class, support linear and LSTM classification. Also support token-level intent.

│ └── open_slu_model.py # the general model class, can automatically build the model through configuration.

├── save # model checkpoint storage dir path and dir to automatically save glove embedding.

├── tools # some callable tools

│ ├── load_from_hugging_face.py # apis to help load checkpoint to reproduction from hugging face.

│ ├── parse_to_hugging_face.py # help to convert checkpoint to hugging face needed format.

│ └── visualization.py # help to visualize prediction error.

├── app.py # help to deploy model in hugging face space.

└── run.py # run script for all function.Example for reproduction of slot-gated model:

python run.py --dataset atis --model slot-gated NOTE: The configuration files of these models are placed in

config/reproducion/*.

- First, you can freely combine and build your own model through config files. For details, see Configuration.

- Then, you can assign the configuration path to train your own model.

Example for stack-propagation fine-tuning:

python run.py -cp config/stack-propagation.yamlExample for multi-GPU fine-tuning:

accelerate config

accelerate launch run.py -cp config/stack-propagation.yamlOr you can assign accelerate yaml configuration.

accelerate launch [--config_file ./accelerate/config.yaml] run.py -cp config/stack-propagation.yamlIn OpenSLU, you are only needed to rewrite required commponents and assign them in configuration instead of rewriting all commponents.

In most cases, rewriting Interaction module is enough for building a new SLU model.

This module accepts HiddenData as input and return with HiddenData, which contains the hidden_states for intent and slot, and other helpful information. The example is as follows:

class NewInteraction(BaseInteraction):

def __init__(self, **config):

self.config = config

...

def forward(self, hiddens: HiddenData):

...

intent, slot = self.func(hiddens)

hiddens.update_slot_hidden_state(slot)

hiddens.update_intent_hidden_state(intent)

return hiddensTo further meet the

needs of complex exploration, we provide the

BaseDecoder class, and the user can simply override the forward() function in class, which accepts HiddenData as input and OutputData as output. The example is as follows:

class NewDecoder(BaseDecoder):

def __init__(self,

intent_classifier,

slot_classifier,

interaction=None):

...

self.int_cls = intent_classifier

self.slot_cls = slot_classifier

self.interaction = interaction

def forward(self, hiddens: HiddenData):

...

interact = self.interaction(hiddens)

slot = self.slot_cls(interact.slot)

intent = self.int_cls(interact.intent)

return OutputData(intent, slot)NOTE: We have set "logger" to global_pool, user can use global_pool.get_value("logger") to get logger module, and call any interface for logger anywhere.

OpenSLU require the input file to be in the .jsonl format, with each line representing a JSON object. The format should adhere to the following structure:

{

"text": ["A", "model", "bilayer", "can", "be", "made", "with", "either", "synthetic", "or", "natural", "lipids", "."],

"intent": "XXX",

"slot": ["O", "B-X", "I-X", "O", "O", "O", "O", "O", "O", "O", "O", "O", "O"]

}After that, you will need to specify the corresponding data path at dataset configuration.

- No Pretrained Encoder

- GloVe Embedding

- BiLSTM Encoder

- BiLSTM + Self-Attention Encoder

- Bi-Encoder (support two encoders for intent and slot, respectively)

- Pretrained Encoder

bert-base-uncasedroberta-basemicrosoft/deberta-v3-base- other hugging-face supported encoder model...

- DCA Net Interaction

- Stack Propagation Interaction

- Bi-Model Interaction(with decoder/without decoder)

- Slot Gated Interaction

All classifier support Token-level Intent and Sentence-level intent. What's more, our decode function supports to both Single-Intent and Multi-Intent.

- LinearClassifier

- AutoregressiveLSTMClassifier

- MLPClassifier

We implement various 10 common-used SLU baselines:

Single-Intent Model

- Bi-Model [ Wang et al., 2018 ] :

bi-model.yaml

- Slot-Gated [ Goo et al., 2018 ] :

slot-gated.yaml

- Stack-Propagation [ Qin et al., 2019 ] :

stack-propagation.yaml

- Joint Bert [ Chen et al., 2019 ] :

joint-bert.yaml

- RoBERTa [ Liu et al., 2019 ] :

roberta.yaml

- ELECTRA [ Clark et al., 2020 ] :

electra.yaml

- DCA-Net [ Qin et al., 2021 ] :

dca_net.yaml

- DeBERTa [ He et al., 2021 ] :

deberta.yaml

| Model | ATIS | SNIPS | ||||

|---|---|---|---|---|---|---|

| Slot F1.(%) | Intent Acc.(%) | EMA(%) | Slot F1.(%) | Intent Acc.(%) | EMA(%) | |

| Non-Pretrained Models | ||||||

| Slot Gated [Goo et al., 2018] | 94.7 | 94.5 | 82.5 | 93.2 | 97.6 | 85.1 |

| Bi-Model [Wang et al., 2018] | 95.2 | 96.2 | 85.6 | 93.1 | 97.6 | 84.1 |

| Stack Propagation [Qin et al., 2019] | 95.4 | 96.9 | 85.9 | 94.6 | 97.9 | 87.1 |

| DCA Net [Qin et al., 2021] | 95.9 | 97.3 | 87.6 | 94.3 | 98.1 | 87.3 |

| Pretrained Models | ||||||

| Joint BERT [Chen et al., 2019] | 95.8 | 97.9 | 88.6 | 96.4 | 98.4 | 91.9 |

| RoBERTa [Liu et al., 2019] | 95.8 | 97.8 | 88.1 | 95.7 | 98.1 | 90.6 |

| Electra [Clark et al., 2020] | 95.8 | 96.9 | 87.1 | 95.7 | 98.3 | 90.1 |

| DeBERTav3[He et al., 2021] | 95.8 | 97.8 | 88.4 | 97.0 | 98.4 | 92.7 |

Multi-Intent Model

- AGIF [ Qin et al., 2020 ] :

agif.yaml

- GL-GIN [ Qin et al., 2021 ] :

gl-gin.yaml

| Model | Mix-ATIS | Mix-SNIPS | ||||||

|---|---|---|---|---|---|---|---|---|

| Slot F1.(%) | Intent F1.(%) | Intent Acc.(%) | EMA(%) | Slot F1.(%) | Intent F1.(%) | Intent Acc.(%) | EMA(%) | |

| Non-Pretrained Models | ||||||||

| Vanilla Multi Task Framework | 85.7 | 80.8 | 75.4 | 36.4 | 92.7 | 98.3 | 96.0 | 70.2 |

| AGIF [Qin et al., 2020] | 86.9 | 80.0 | 72.7 | 39.5 | 94.4 | 97.4 | 93.7 | 74.8 |

| GL-GIN [Qin et al., 2021] | 86.3 | 81.1 | 77.1 | 43.6 | 94.2 | 98.5 | 96.1 | 74.9 |

* NOTE: Due to some stochastic factors(e.g., GPU and environment), it maybe need to slightly tune the hyper-parameters using grid search to obtain better results.

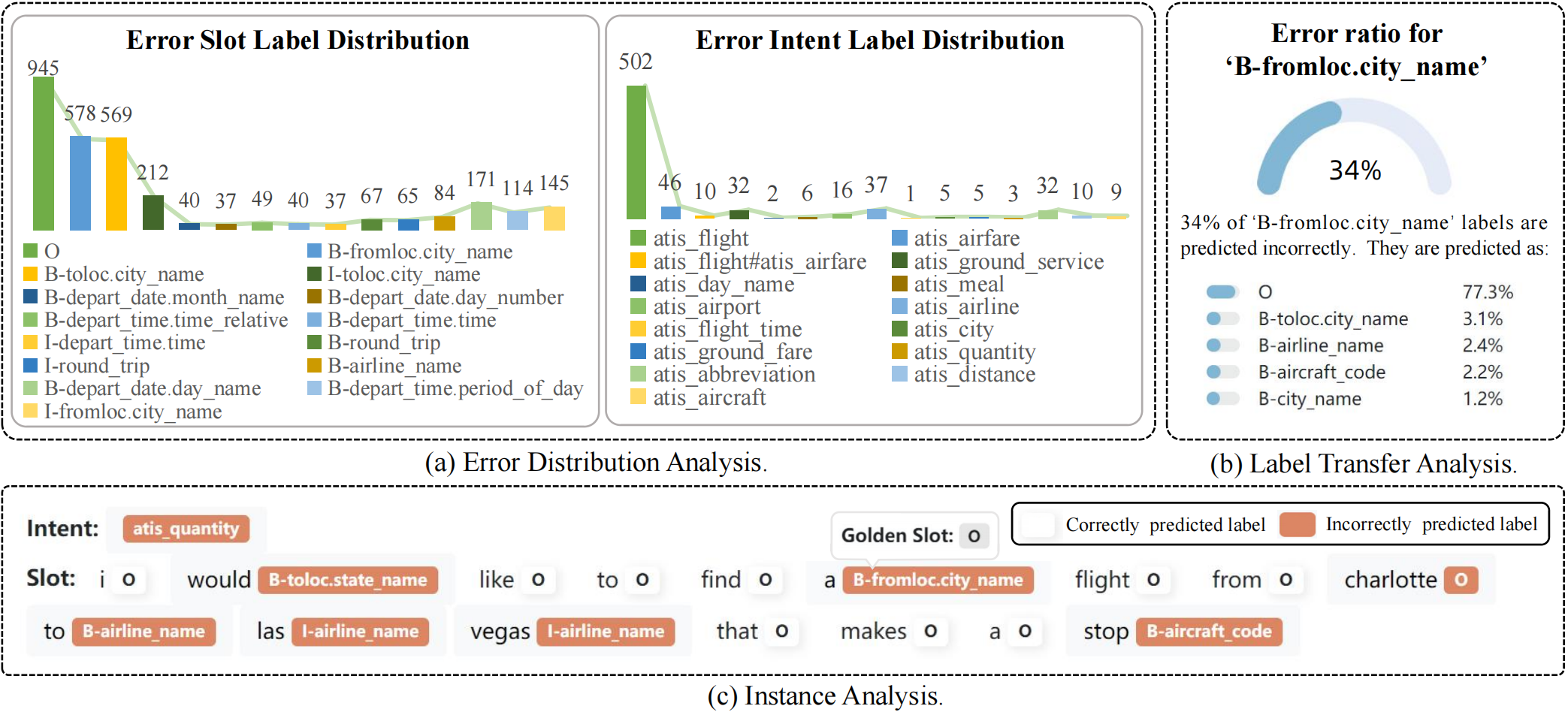

Model metrics tests alone no longer adequately reflect the model's performance. To help researchers further improve their models, we provide a tool for visual error analysis.

We provide an analysis interface with three main parts:

- (a) error distribution analysis;

- (b) label transfer analysis;

- (c) instance analysis.

python tools/visualization.py \

--config_path config/visual.yaml \

--output_path {ckpt_dir}/outputs.jsonlVisualization configuration can be set as below:

host: 127.0.0.1

port: 7861

is_push_to_public: true # whether to push to gradio platform(public network)

output_path: save/stack/outputs.jsonl # output prediction file path

page-size: 2 # the number of instances of each page in instance anlysis. We provide an script to deploy your model automatically. You are only needed to run the command as below to deploy your own model:

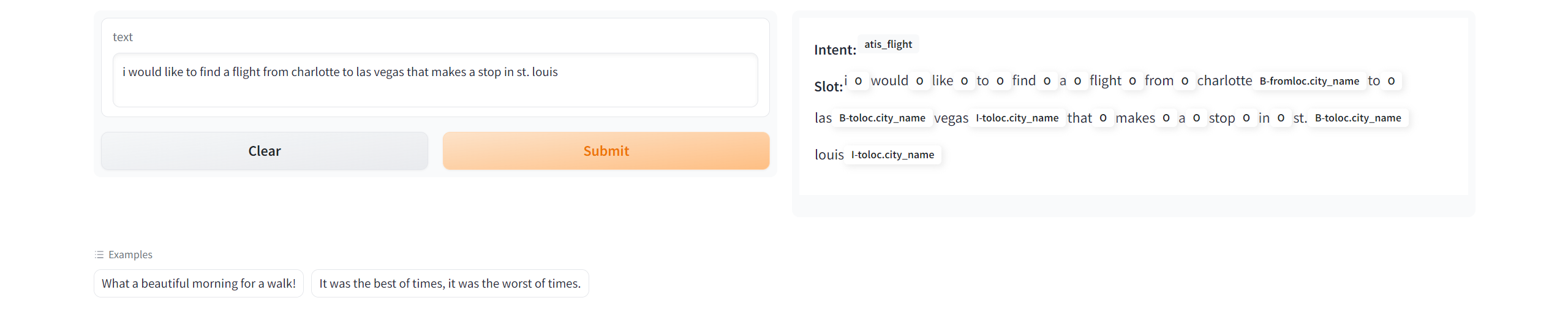

python app.py --config_path config/examples/from_pretrained.yamlNOTE: Please set logger_type to local if you do not want to login with wandb for deployment.

We also offer an script to transfer models trained by OpenSLU to hugging face format automatically. And you can upload the model to your Model space.

python tools/parse_to_hugging_face.py -cp config/reproduction/atis/bi-model.yaml -op save/tempIt will generate 5 files, and you should only need to upload config.json, pytorch_model.bin and tokenizer.pkl.

After that, others can reproduction your model just by adjust _from_pretrained_ parameters in Configuration.

Please create Github issues here or email Libo Qin or Qiguang Chen if you have any questions or suggestions.